Employee Activity Monitoring Risks: Digital Evidence Challenges in Workplace Investigations

Digital activity dashboards can suggest consistent engagement — but without validation across systems, they may reflect signals that are incomplete, misleading, or artificially generated.

Digital activity is often treated as evidence.

Status indicators, login duration, and system activity are routinely used to assess productivity and support workplace decisions.

But these signals can be misleading.

Tools that simulate activity — including mouse movers and automated inputs — create a gap between what systems show and what actually occurred.

In a workplace investigation, that gap matters.

Because:

When indicators are mistaken for evidence, decisions become difficult to defend.

This article explores where digital activity signals fail, how simulated activity distorts findings, and how organizations can apply structured investigative thinking to reach defensible conclusions.

When “Activity” Can Be Manufactured

In today’s workplace, digital activity is often treated as a proxy for productivity.

Status indicators.

Mouse movement.

Login duration.

These signals are embedded in dashboards, referenced in performance conversations, and increasingly relied upon in workplace investigations.

But they share a critical limitation: they can be simulated.

Mouse movers — both physical devices and software-based tools — simulate activity without actual engagement. While often dismissed as a minor workaround, their presence introduces a much more significant issue:

The integrity of digital evidence.

The Gap Leaders Sense — But Struggle to Prove

Many executives and HR leaders have experienced it:

A sense that something isn’t aligning.

That activity appears consistent — but outcomes don’t.

This is the gap between:

System-generated signals

And actual human behavior

When that gap isn’t examined, assumptions fill it.

And in an investigative context, unexamined assumptions are a liability.

Why Surface-Level Signals Fail

Surface-level indicators — such as status icons or login duration — are:

Easily manipulated

Lacking context

Insufficient on their own

Yet they are often treated as conclusions.

In reality:

They are indicators — not evidence.

This distinction is where many workplace investigations begin to break down.

From Indicators to Evidence: Applying Investigative Logic

Proving artificial activity is not about identifying a single moment.

It is about establishing a pattern that holds under scrutiny.

That requires a structured approach grounded in three principles:

1. Correlation Across Independent Sources

No single system should stand alone.

Defensible findings rest on alignment across:

System activity

Output or deliverables

Platform usage patterns

The question is not:

“What does this system show?”

But:

“Do multiple systems tell the same story?”
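The correlation principle can be made concrete with a small sketch. It assumes a hypothetical hourly export for one employee with three independent measures: minutes a status or input log reported as active, deliverables produced, and interactions logged by business applications. The field names, the 30-minute threshold, and the helper functions are illustrative assumptions, not a Tracepoint method or any vendor's API. The sketch flags hours in which the activity signal stands alone.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class HourlyRecord:
    """One hour of data for one employee from three independent sources (hypothetical schema)."""
    hour: str            # label for the hour, e.g. "09"
    active_minutes: int  # system activity: minutes a status/input log reported "active"
    deliverables: int    # output: items produced that hour (tickets closed, files saved, etc.)
    app_events: int      # platform usage: interactions logged by business applications


def corroborated(record: HourlyRecord, min_active: int = 30) -> Optional[bool]:
    """None if the hour shows little reported activity (nothing to corroborate),
    True if output or platform usage supports the activity signal,
    False if the activity signal stands alone."""
    if record.active_minutes < min_active:
        return None
    return record.deliverables > 0 or record.app_events > 0


def uncorroborated_hours(records: List[HourlyRecord], min_active: int = 30) -> List[str]:
    """Hours in which only the activity signal suggests work was happening."""
    return [r.hour for r in records if corroborated(r, min_active) is False]


records = [
    HourlyRecord("09", active_minutes=55, deliverables=2, app_events=40),
    HourlyRecord("10", active_minutes=60, deliverables=0, app_events=0),
    HourlyRecord("11", active_minutes=10, deliverables=0, app_events=0),
]
print(uncorroborated_hours(records))  # ['10']
```

A flagged hour is a question, not a finding: reading, meetings, or offline work can leave activity without recorded output, which is why contextual validation still has to follow.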

2. Pattern Analysis Over Time

Isolated anomalies prove very little.

However, repeated patterns begin to shift the analysis from suspicion to insight, particularly patterns that appear:

Mechanically consistent

Perfectly timed

Misaligned with human behavior
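One way to quantify "mechanically consistent" timing is the coefficient of variation (standard deviation divided by mean) of the gaps between input events. Human input tends to be irregular, while a simple fixed-interval mouse mover produces nearly identical gaps. This minimal sketch assumes input-event timestamps in seconds are available; the function names, the 0.05 threshold, and the 20-event minimum are hypothetical values chosen for illustration.

```python
import statistics
from typing import List, Optional


def interval_cv(timestamps: List[float]) -> Optional[float]:
    """Coefficient of variation (stdev / mean) of gaps between consecutive input events.

    Returns None when there are fewer than two gaps or the mean gap is zero.
    """
    ts = sorted(timestamps)
    gaps = [later - earlier for earlier, later in zip(ts, ts[1:])]
    if len(gaps) < 2:
        return None
    mean_gap = statistics.mean(gaps)
    if mean_gap == 0:
        return None
    return statistics.stdev(gaps) / mean_gap


def looks_mechanical(timestamps: List[float], max_cv: float = 0.05, min_events: int = 20) -> bool:
    """Flag a window whose input timing is suspiciously regular (hypothetical thresholds)."""
    if len(timestamps) < min_events:
        return False
    cv = interval_cv(timestamps)
    return cv is not None and cv <= max_cv


# A fixed-interval mover: one event every 60 seconds.
mover = [i * 60.0 for i in range(30)]

# Irregular, human-like gaps (seconds), repeated to give 30 events.
gaps = [3, 41, 7, 19, 2, 65, 12, 28, 5, 33] * 3
human = [float(sum(gaps[:i])) for i in range(len(gaps))]

print(looks_mechanical(mover))  # True
print(looks_mechanical(human))  # False
```

Some simulation tools randomize their intervals, and legitimate automation can produce regular timing, so a low value is one input to pattern analysis rather than proof, and a normal value does not rule simulation out.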

3. Contextual Validation

Even strong patterns require context.

Because:

Not all inconsistencies are intentional

Not all anomalies indicate misconduct

Defensible investigations validate:

Role expectations

Workflow realities

Environmental or operational factors

Before conclusions are drawn.

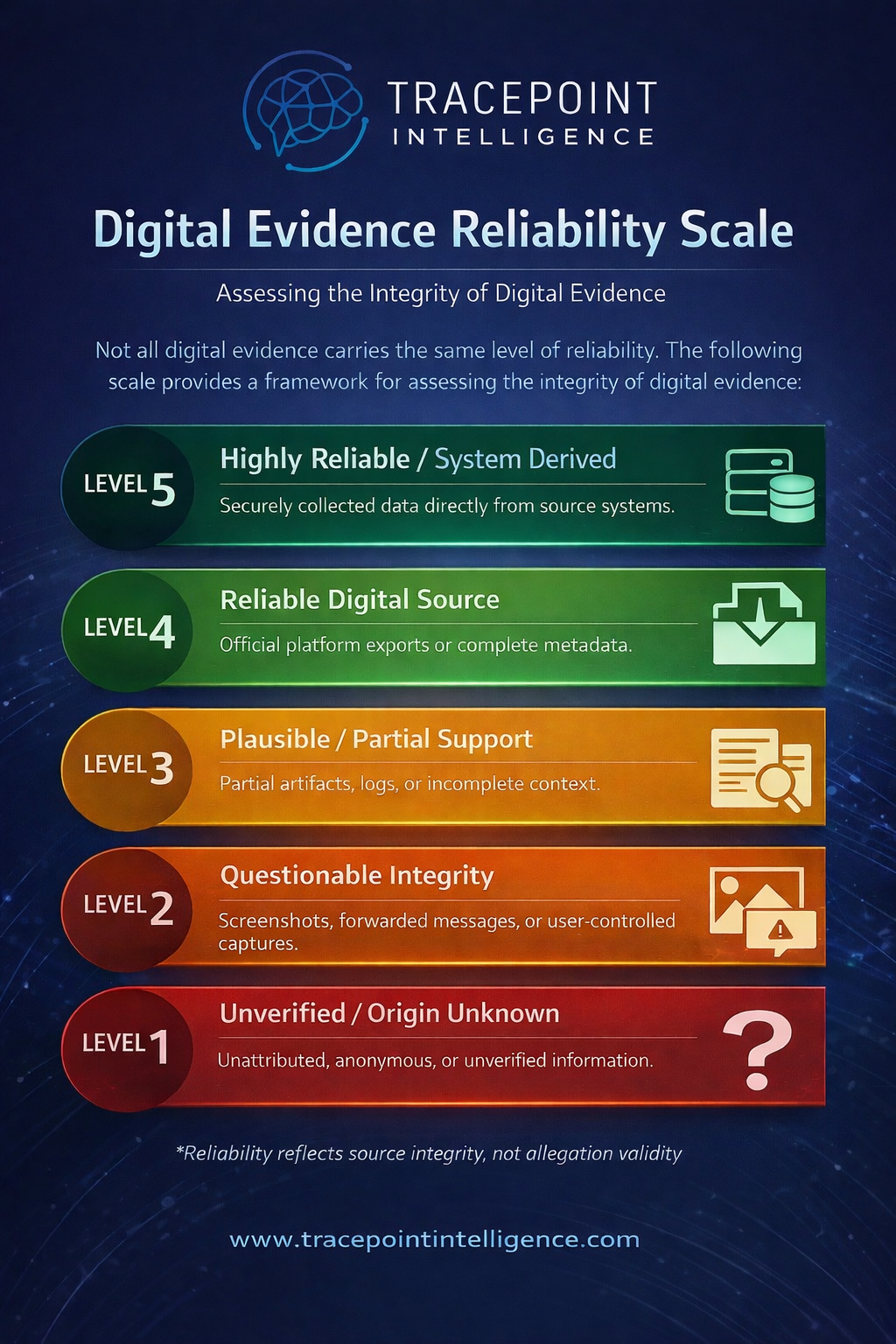

Introducing the Digital Evidence Reliability Scale

At the center of this challenge is a simple truth:

Not all digital evidence carries equal weight.

The Tracepoint Digital Evidence Reliability Scale provides a structured framework to assess:

The strength of different evidence types

Their defensibility in decision-making

In cases involving simulated activity:

Status indicators → Low reliability

Login duration → Low to Moderate

Cross-system correlation → High

Validated behavioral patterns → High

This framework helps organizations move from:

Signals → Insight → Defensible conclusions

Without a structured way to assess evidentiary strength, conclusions can quickly become unreliable. The scale provides a practical framework for evaluating that strength in workplace investigations.

The Tracepoint Intelligence Digital Evidence Reliability Scale categorizes digital evidence from unverified inputs to system-derived data, helping organizations assess reliability and support defensible investigative outcomes.
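The tiers listed above for simulated-activity cases can also be recorded alongside a case file, so that it is explicit which evidence types a conclusion rests on. This minimal sketch assumes hypothetical evidence-type labels and a simple rule, drawn from treating surface-level signals as directional, that a conclusion with no High-reliability support is flagged as directional only. It is an illustration, not the scale's official scoring.

```python
from enum import IntEnum
from typing import Iterable


class Reliability(IntEnum):
    """Reliability tiers from the scale, ordered so that higher means stronger."""
    LOW = 1
    LOW_TO_MODERATE = 2
    HIGH = 3


# Hypothetical labels mapped to the tiers listed for simulated-activity cases.
SCALE = {
    "status_indicator": Reliability.LOW,
    "login_duration": Reliability.LOW_TO_MODERATE,
    "cross_system_correlation": Reliability.HIGH,
    "validated_behavioral_pattern": Reliability.HIGH,
}


def strongest_support(evidence: Iterable[str]) -> Reliability:
    """Return the highest tier among the evidence types a conclusion relies on.

    Raises KeyError for an unknown label and ValueError if no evidence is given.
    """
    tiers = [SCALE[label] for label in evidence]
    if not tiers:
        raise ValueError("a conclusion needs at least one evidence type")
    return max(tiers)


def is_directional_only(evidence: Iterable[str]) -> bool:
    """True when no High-reliability evidence supports the conclusion (assumed rule)."""
    return strongest_support(evidence) < Reliability.HIGH


print(is_directional_only(["status_indicator", "login_duration"]))          # True
print(is_directional_only(["login_duration", "cross_system_correlation"]))  # False
```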

The Leadership Dimension

This issue extends beyond investigation.

It sits at the intersection of:

Evidence

Decision-making

Organizational trust

Over-reliance on weak signals can:

Undermine credibility

Lead to premature or unsupported decisions

Drive unintended behaviors within teams

Strong leadership does not rely on more data.

It relies on better interpretation of the right data.

The goal is not to monitor activity — but to understand behavior.

What Defensible Organizations Do Differently

Organizations that navigate this well take a deliberate approach:

They treat surface-level signals as directional, not definitive

They validate findings across multiple independent sources

They analyze patterns over time, not isolated snapshots

They document how conclusions were reached

And when necessary, they are willing to pause:

“We don’t have enough evidence yet.”

That restraint is not a weakness.

It is what protects the outcome.

The Real Risk

The risk is not simply that activity can be simulated.

It is that organizations may:

Act on incomplete evidence

Be unable to substantiate decisions

Face legal or reputational scrutiny as a result

Because ultimately:

An investigation is only as strong as the evidence behind it — and the discipline used to interpret it.

Final Thought

As digital work environments evolve, so do the methods used to simulate activity.

The organizations that manage this effectively are not those with the most visibility.

They are the ones with:

Structured investigative thinking

Clear evidence standards

Tracepoint Intelligence provides structured, defensible investigative support across Canada and the United States — specializing in digital evidence, workplace investigations, and complex organizational risk.

If your organization is navigating ambiguous or high-risk digital evidence:

Engage Tracepoint.